A binary symmetric channel is an approximation of a form of noise that is often observed in a communication channel. As individual bits are transmitted, we assume that there is some fixed, usually very small, probability \(p\) that the value of the bit will switch, creating an error. If \(N\) bits are to be transmitted and errors occur independently of one another, the number of errors follows a \(B(N,p)\) binomial distribution.
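The channel model above can be simulated directly. The following is a minimal sketch, assuming bits are stored as a Python list of 0s and 1s; the names `transmit` and `count_errors` are hypothetical helpers introduced only for illustration.

```python
import random

def transmit(bits, p, rng=None):
    """Simulate a binary symmetric channel (illustrative helper).

    Each bit is flipped independently with probability p.
    """
    rng = rng or random.Random()
    # b ^ 1 switches 0 to 1 and 1 to 0.
    return [b ^ 1 if rng.random() < p else b for b in bits]

def count_errors(sent, received):
    """Count the positions where the received bit differs from the sent bit."""
    return sum(s != r for s, r in zip(sent, received))

# Example run: 1024 random bits sent with p = 0.002 (seed chosen arbitrarily).
rng = random.Random(0)
sent = [rng.randint(0, 1) for _ in range(1024)]
received = transmit(sent, 0.002, rng)
print(count_errors(sent, received))
```

Repeating the run with different seeds gives error counts that vary from one transmission to the next, which is the behavior the binomial model describes.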

Suppose that 1024 bits are to be transmitted across a binary symmetric channel with \(p=0.002\text{.}\) For any single bit, the likelihood of an error is quite small, but we can compute the probability of having any given number of errors in the full transmission. If there are to be \(k\) errors, then the number of possible sets of positions for those errors among all of the bits is \(\binom{1024}{k}\text{.}\) The probability of any single error pattern is \((0.002)^k \cdot (0.998)^{1024-k}\text{.}\) The product

\begin{equation*}

\binom{1024}{k} (0.002)^k \cdot (0.998)^{1024-k}

\end{equation*}

is then the probability of exactly \(k\) transmission errors.
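The formula above can be evaluated numerically. This is a small sketch using only Python's standard library; the function name `error_probability` and its default arguments are illustrative choices, not part of the original text.

```python
from math import comb

def error_probability(k, n=1024, p=0.002):
    """Probability of exactly k bit errors among n transmitted bits."""
    # Number of error patterns times the probability of each pattern.
    return comb(n, k) * p**k * (1 - p)**(n - k)

# Tabulate the probabilities for k = 0, 1, ..., 6.
for k in range(7):
    print(k, round(error_probability(k), 4))
```

Summing `error_probability(k)` over all \(k\) from 0 to 1024 should give 1 up to rounding, which is a quick sanity check on the formula.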

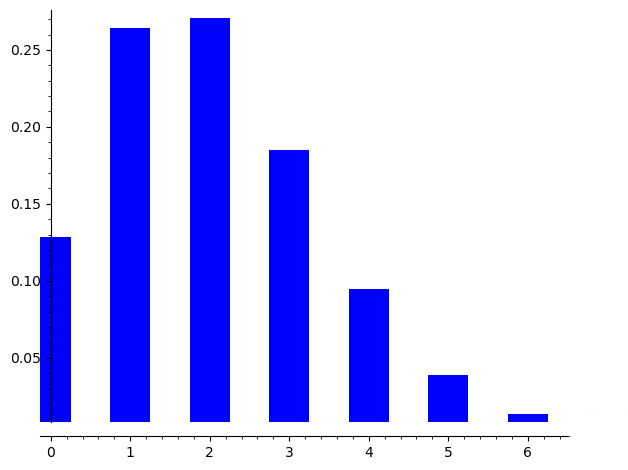

Here is a plot of these probabilities for \(k \leq 6\text{.}\)

Notice that the probability that there is no error among all 1024 bits is approximately \(0.13\text{,}\) and that 1, 2, or 3 errors in the received data are each more likely than none. These errors could be costly, which motivates the topic of coding theory.