(suggested by Kaily Burke) Consider a citywide bike share program with three stations A, B, and C. Bikes borrowed from one station may be returned to any station by the end of each night. Assuming all bikes borrowed on a certain day are returned at night, we observe from past data the following probabilities of each bike’s subsequent state:

- From Station A: \(30\%\) of bikes return to Station A, \(50\%\) return to Station B, and \(20\%\) return to Station C.

- From Station B: \(10\%\) return to Station A, \(60\%\) to Station B, and \(30\%\) to Station C.

- From Station C: \(10\%\) return to Station A, \(10\%\) to Station B, and \(80\%\) to Station C.

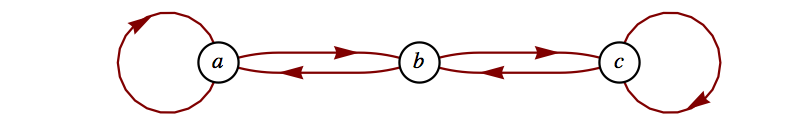

Unlike the tennis example, in this process every state can be reached from every other state. These are called transition states. The natural question to ask here is whether the distribution of bikes tends toward a steady state. In fact, it does. No matter where a bike starts, after enough days the probability that it is at any of the three stations is largely independent of its starting station.

The transition matrix for this Markov chain with the ordering of states A, B, C is

\begin{equation*}
B=\left(
\begin{array}{ccc}
0.3 & 0.5 & 0.2 \\
0.1 & 0.6 & 0.3 \\
0.1 & 0.1 & 0.8 \\
\end{array}
\right)
\end{equation*}

If we compute the seventh power of this matrix, we get the probabilities after a week. For example, the first row gives the probabilities of being in states A, B, and C after a week if the initial state is A. Notice that these probabilities are not very different if the initial state is B or C.
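The seventh power can be computed along the following lines. This is a minimal plain-Python sketch using exact fractions, not the SageMath computation the text relies on; the helper names `mat_mul` and `mat_pow` are illustrative assumptions.

```python
from fractions import Fraction

# Transition matrix B from the text, rows and columns ordered A, B, C.
B = [
    [Fraction(3, 10), Fraction(5, 10), Fraction(2, 10)],
    [Fraction(1, 10), Fraction(6, 10), Fraction(3, 10)],
    [Fraction(1, 10), Fraction(1, 10), Fraction(8, 10)],
]


def mat_mul(X, Y):
    """Multiply two square matrices stored as lists of rows."""
    n = len(X)
    return [[sum(X[i][k] * Y[k][j] for k in range(n)) for j in range(n)]
            for i in range(n)]


def mat_pow(M, p):
    """Raise M to the positive integer power p by repeated multiplication."""
    result = M
    for _ in range(p - 1):
        result = mat_mul(result, M)
    return result


# Row i of B7 holds the probabilities after seven days for a bike
# that starts at station i.
B7 = mat_pow(B, 7)
for station, row in zip("ABC", B7):
    print(station, [round(float(x), 4) for x in row])
```

Comparing the three printed rows column by column shows how little the starting station matters after a week.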

We don’t need to perform these calculations to get exact steady-state probabilities. We need only look to one of the left eigenvectors of the transition matrix, as indicated in the following theorem, which we state here without proof.

Theorem 12.5.4.

Given a square matrix \(T\) with nonnegative entries having the property that the row sums are all equal to one, one of its left eigenvalues will be \(1\) and there exists a corresponding left eigenvector with nonnegative entries.

We first examine the left eigenvectors of our matrix and see that 1 is the first eigenvalue, with an eigenspace of dimension one and a basis vector having positive entries.

We extract the first eigenvector and divide by the sum of its entries to get a probability vector. Recall that SageMath objects are indexed starting at 0.
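The same probability vector can be reached without an eigenvector routine. The sketch below is plain Python rather than SageMath, and it swaps in a different technique: it solves \(\pi B = \pi\) together with the normalization that the entries sum to one, using exact Gauss-Jordan elimination. The function name `steady_state` is an illustrative assumption, and the code assumes the solution is unique, as it is for this chain.

```python
from fractions import Fraction

# Transition matrix B from the text, rows and columns ordered A, B, C.
B = [
    [Fraction(3, 10), Fraction(5, 10), Fraction(2, 10)],
    [Fraction(1, 10), Fraction(6, 10), Fraction(3, 10)],
    [Fraction(1, 10), Fraction(1, 10), Fraction(8, 10)],
]


def steady_state(P):
    """Solve pi * P = pi with sum(pi) = 1 by exact Gauss-Jordan elimination.

    Assumes the steady-state vector is unique.
    """
    n = len(P)
    # Equation j says: sum_i pi_i * P[i][j] - pi_j = 0.
    rows = [[P[i][j] - (1 if i == j else 0) for i in range(n)] + [Fraction(0)]
            for j in range(n)]
    # The n equations are dependent, so replace the last with sum(pi) = 1.
    rows[-1] = [Fraction(1)] * n + [Fraction(1)]
    for col in range(n):
        # Find a row with a nonzero entry in this column and move it up.
        pivot = next(r for r in range(col, n) if rows[r][col] != 0)
        rows[col], rows[pivot] = rows[pivot], rows[col]
        pivot_value = rows[col][col]
        rows[col] = [x / pivot_value for x in rows[col]]
        # Clear this column in every other row.
        for r in range(n):
            if r != col and rows[r][col] != 0:
                factor = rows[r][col]
                rows[r] = [x - factor * y for x, y in zip(rows[r], rows[col])]
    return [rows[i][n] for i in range(n)]


pi = steady_state(B)
print([str(p) for p in pi])  # ['1/8', '3/10', '23/40']
```

Index 0 of `pi` is the long-run probability of Station A, matching the zero-based indexing noted above.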

We can verify that the probabilities are in equilibrium: they stay the same when we multiply them by the transition matrix.
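The check can also be carried out by hand. The vector \(\left(\frac{1}{8}, \frac{3}{10}, \frac{23}{40}\right)\) used below is worked out here from the matrix \(B\) rather than quoted from the text's computed output, so treat it as a derived value. Multiplying it on the right by \(B\) gives, entry by entry,

\begin{equation*}
\begin{array}{rcl}
\frac{1}{8}(0.3)+\frac{3}{10}(0.1)+\frac{23}{40}(0.1) & = & \frac{15}{400}+\frac{12}{400}+\frac{23}{400} = \frac{1}{8} \\
\frac{1}{8}(0.5)+\frac{3}{10}(0.6)+\frac{23}{40}(0.1) & = & \frac{25}{400}+\frac{72}{400}+\frac{23}{400} = \frac{3}{10} \\
\frac{1}{8}(0.2)+\frac{3}{10}(0.3)+\frac{23}{40}(0.8) & = & \frac{10}{400}+\frac{36}{400}+\frac{184}{400} = \frac{23}{40} \\
\end{array}
\end{equation*}

so the vector is unchanged. In the long run a bike is at Station A, B, or C with probability \(12.5\%\), \(30\%\), and \(57.5\%\) respectively.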